Welcome to the ALR-Lab

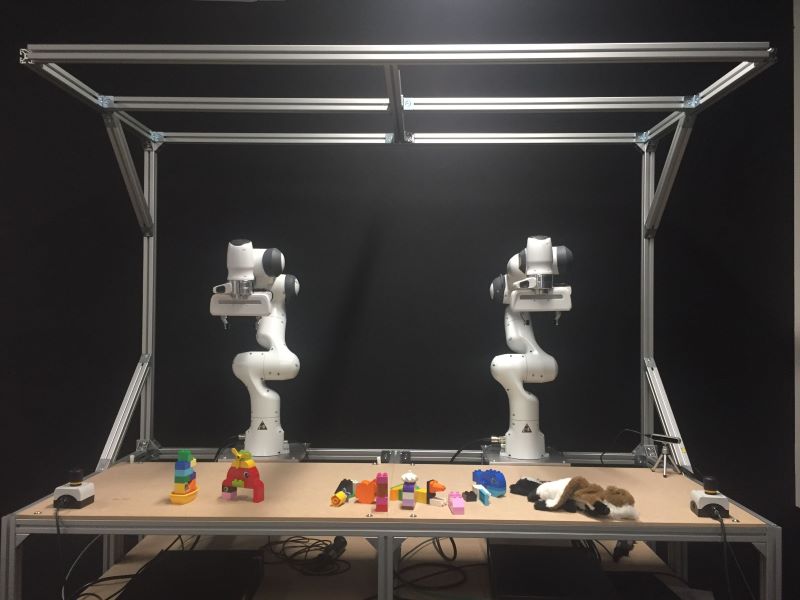

The Autonomous Learning Robots (ALR) Lab at the Institute for Anthropomatics and Robotics of the Department of Informatics focuses on the development of novel machine learning methods for robotics. Future robot technology will have to deal with very challenging real-world scenarios that are quite different from the lab environments typically considered in robotics research. Real-world environments are unknown and unstructured, consisting of objects of unpredictable shapes or even other, unknown agents such as humans. The robot can encounter so many different situations while interacting with such environments that pre-programming such tasks seems to be infeasible.

Our research is focused on the intersection of machine learning, robotics, human-robot interaction and computer vision. Our goal is to create data-efficient and mathematically principled machine learning algorithms that are suitable for complex robot domains such as grasping and manipulation, forceful interactions or dynamic motor tasks. In our research, we always aim for a strong theoretical basis for our algorithms, which are derived from first principles. In terms of methods, our work is focused on:

- Movement Representations

- Reinforcement Learning and Policy Search

- Imitation Learning and Interactive Learning

- Model-Learning

- Perception

While we strive to extend the state of the art for each of these areas of machine learning, our vision is to create an orchestration of these methods in order to develop a fully autonomous learning robotics system.

News:

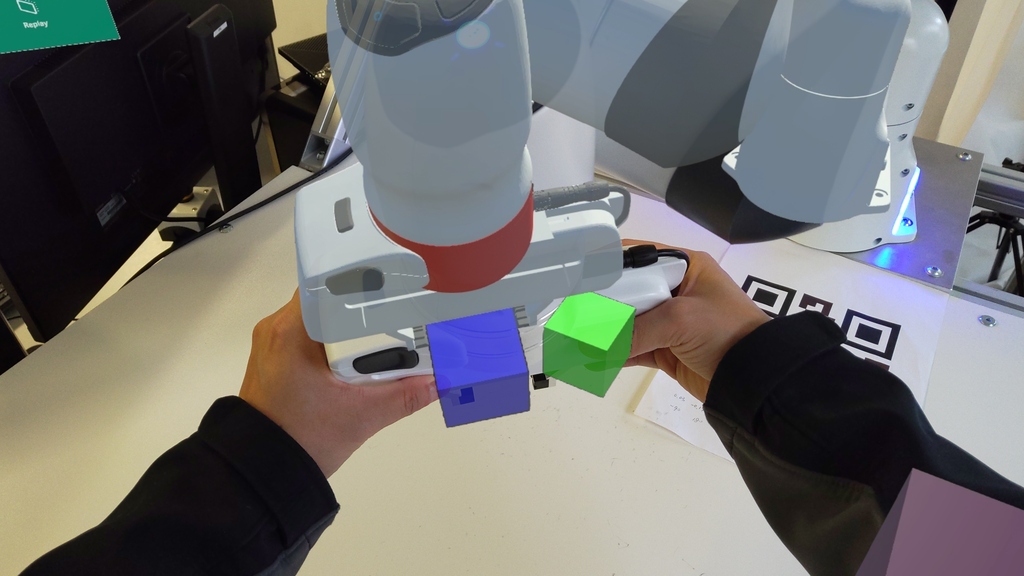

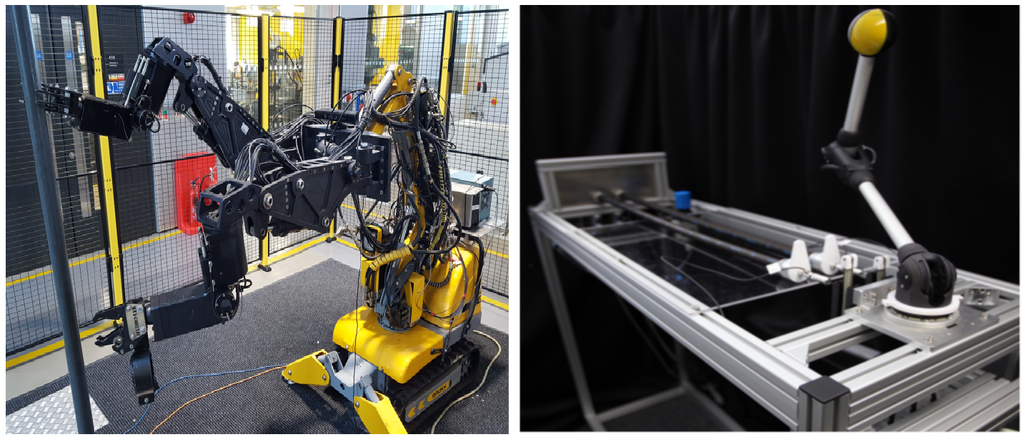

In this paper, we conducted a comprehensive user study to compare five different robot control interfaces for robot learning. This study reveals valuable insights into their usability and effectiveness. The results show that the proposed Kinesthetic Teaching interface significantly outperforms the other interfaces on both objective metrics (success rate, task completeness, and completion time) and subjective metrics (User Experience Questionnaires, UEQ+).

more

Now you can find us on the 4th floor of the InformatiKOM I, Building 50.19, 76131 Karlsruhe.

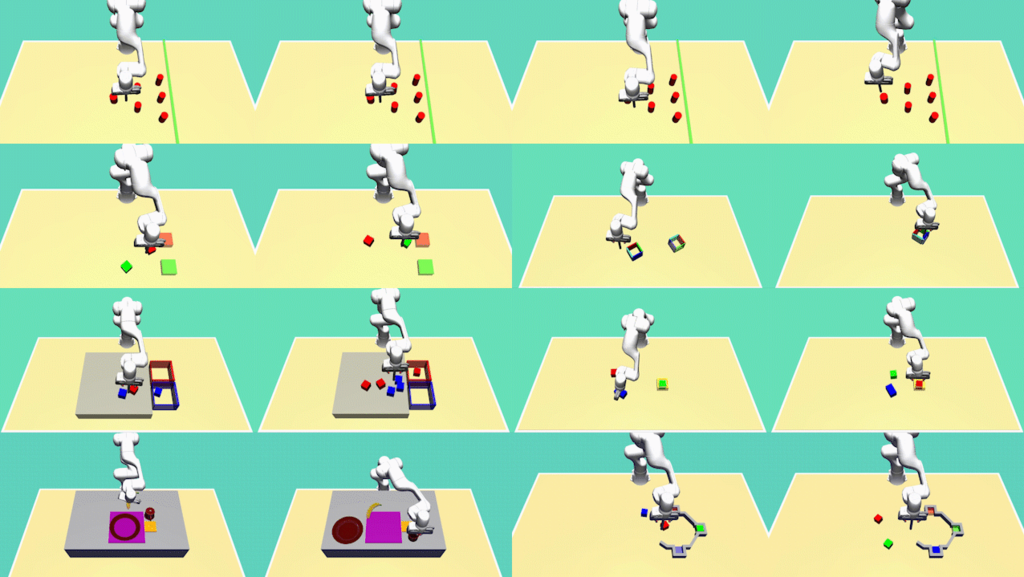

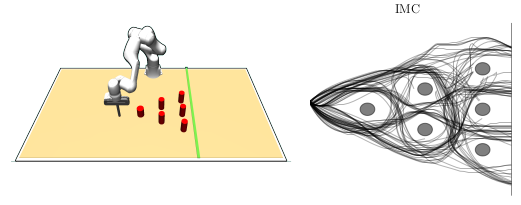

We introduce simulation benchmark environments and the corresponding Datasets with Diverse human Demonstrations for Imitation Learning (D3IL), designed explicitly to evaluate a model's ability to learn multi-modal behavior.

more

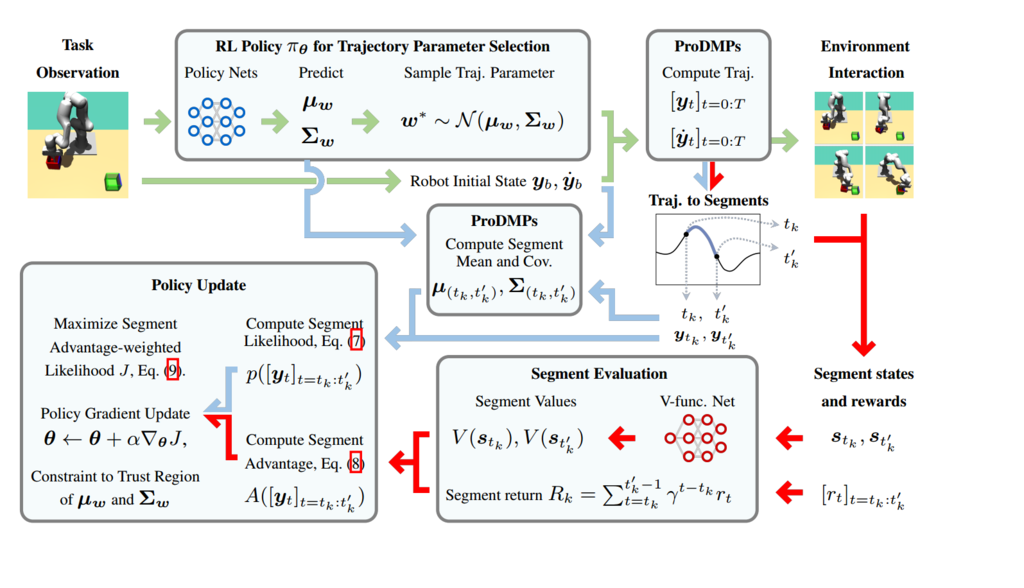

We propose a novel RL framework that integrates step-based information into the policy updates of Episodic RL, while preserving the broad exploration scope, movement correlation modeling and trajectory smoothness.

more

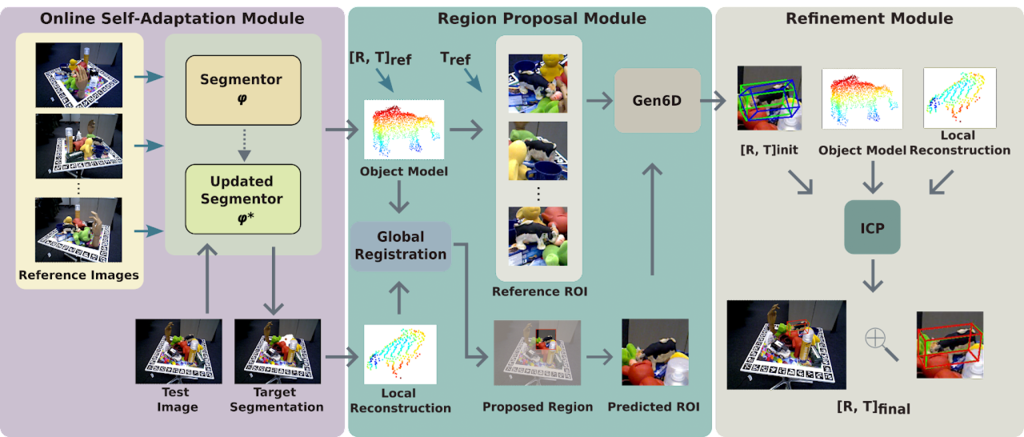

Interested in 6D pose estimation of novel objects without mesh models and ground-truth masks from cluttered scenes? Check out our #CORL2023 paper "SA6D: Self-Adaptive Few-Shot 6D Pose Estimator for Novel and Occluded Objects".

more

Need a principled foundational formalism for designing hierarchical world models? Check out our new paper, where we propose a probabilistic formalism and neural network architecture for learning world models at multiple temporal abstractions/hierarchies. These lightweight latent linear models can compete with state-of-the-art transformers in predictions and additionally quantify uncertainties.

more

Imitation learning from diverse human demonstrations can lead to multimodal data distributions. We propose a new algorithm that combines curriculum learning and mixture of experts policies to avoid mode-averaging and learn diverse behavior.

more

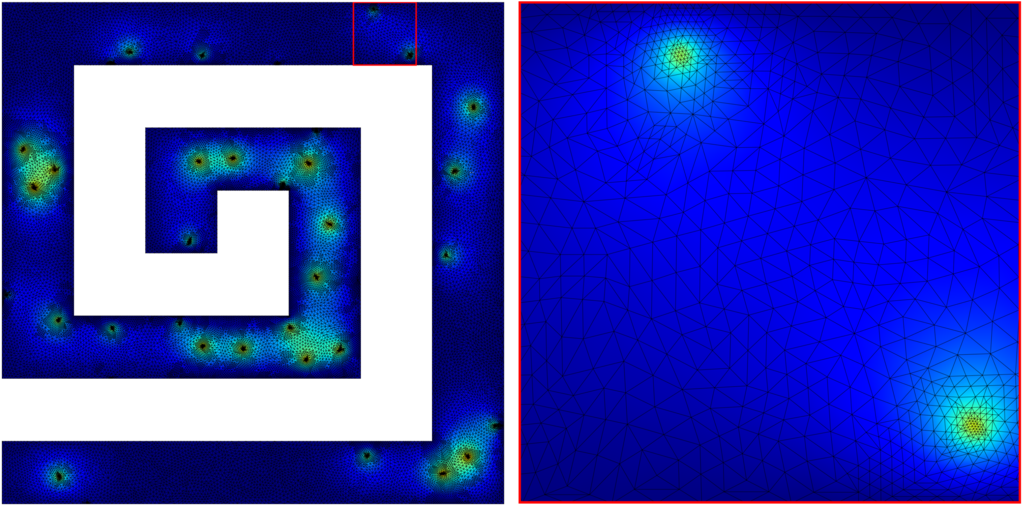

Feel the "tingle" of optimized simulations with our novel algorithm, Autonomous Sensory Meridian Response Adaptive Swarm Mesh Refinement (ASMR). By using a network of intelligent agents within the mesh, our method dramatically improves both computational speed and accuracy. ASMR offers scalable and efficient refinements, beats common baselines and performs on par with expensive error-based strategies, while operating far more efficiently.

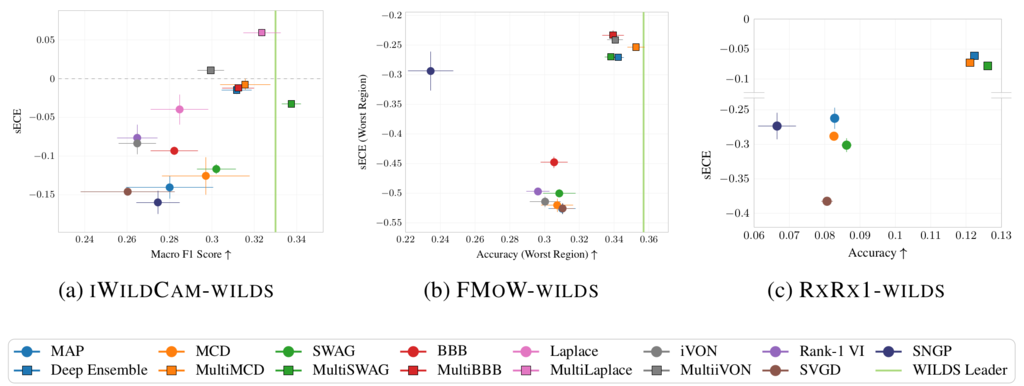

Bayesian deep learning (BDL) offers better-calibrated predictions on distribution-shifted data. We present a large-scale survey to assess modern BDL techniques using the WILDS collection's real-world datasets, emphasizing their ability to generalize and calibrate under distribution shifts across diverse neural network architectures. Our study includes the first systematic BDL evaluation for finetuning large pre-trained models, a novel calibration metric that allows distinguishing over- from underconfident predictions, and a wide range of convolutional and transformer-based neural networks. Interestingly, ensembling single-mode approximations typically enhances the models' generalization and calibration. Yet, a challenge arises when finetuning large transformer models. In such cases, "Bayes By Backprop" excels in accuracy, while SWAG achieves the best calibration.

more

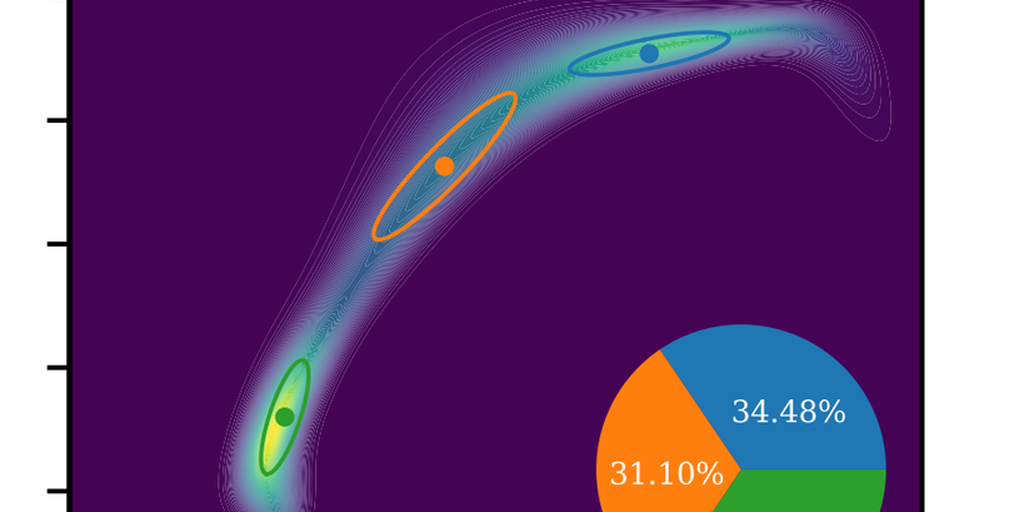

Variational inference with Gaussian mixture models (GMMs) enables learning of highly tractable yet multi-modal approximations of intractable target distributions with up to a few hundred dimensions. The two currently most effective methods for GMM-based variational inference, VIPS and iBayes-GMM, both employ independent natural gradient updates for the individual components and their weights. We identify several design choices that distinguish both approaches and test all possible combinations. We identify a new combination of algorithmic choices that yields more accurate solutions with fewer updates than previous methods.

more

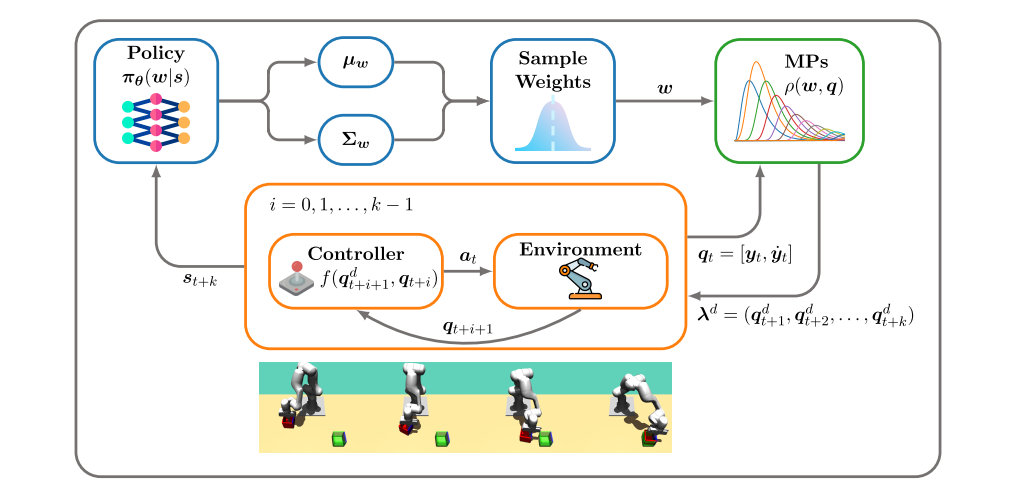

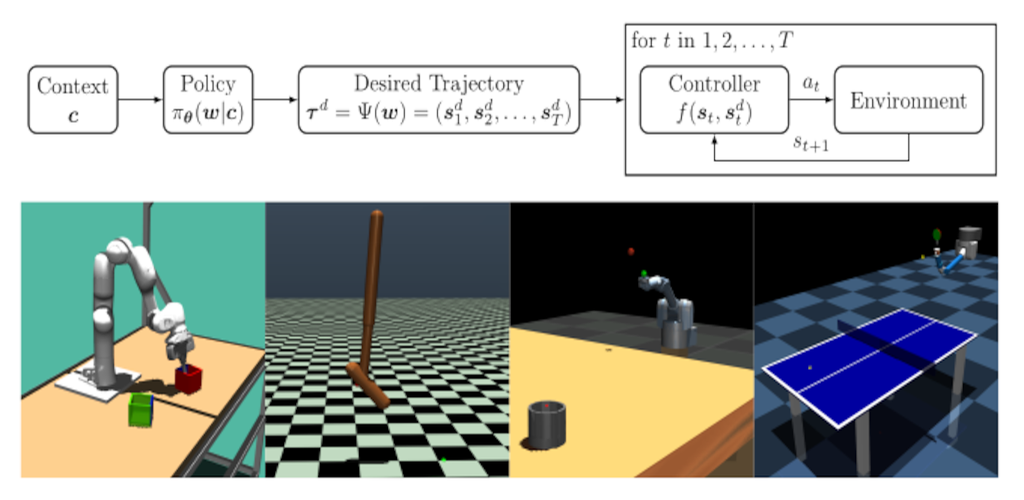

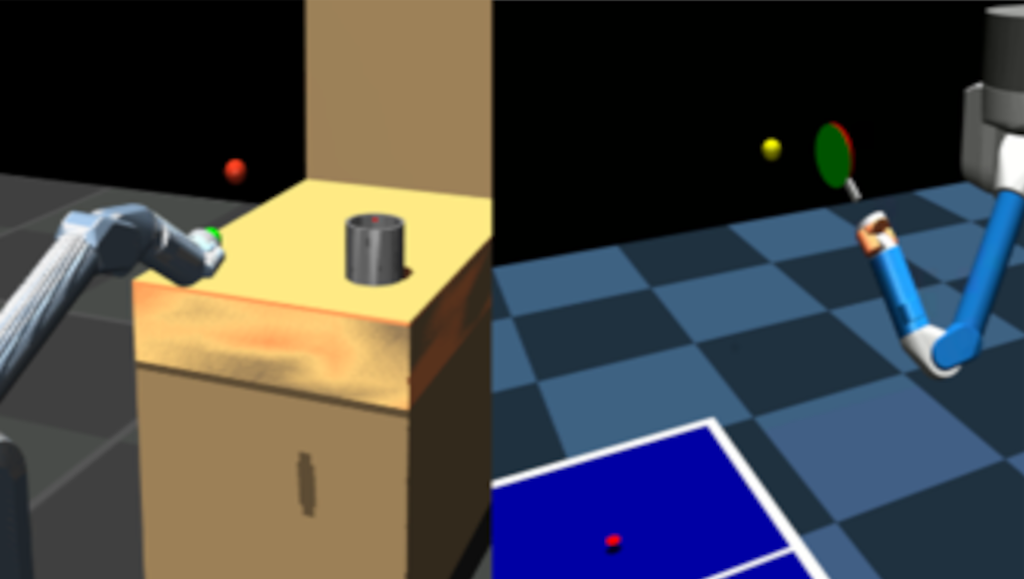

We introduce a novel deep reinforcement learning (RL) approach called Movement Primitive-based Planning Policy (MP3). By integrating movement primitives (MPs) into the deep RL framework, MP3 enables the generation of smooth trajectories throughout the whole learning process while effectively learning from sparse and non-Markovian rewards. Additionally, MP3 maintains the capability to adapt to changes in the environment during execution using the replanning capabilities of ProDMPs and outperforms other RL algorithms on competitive benchmark tasks.

more

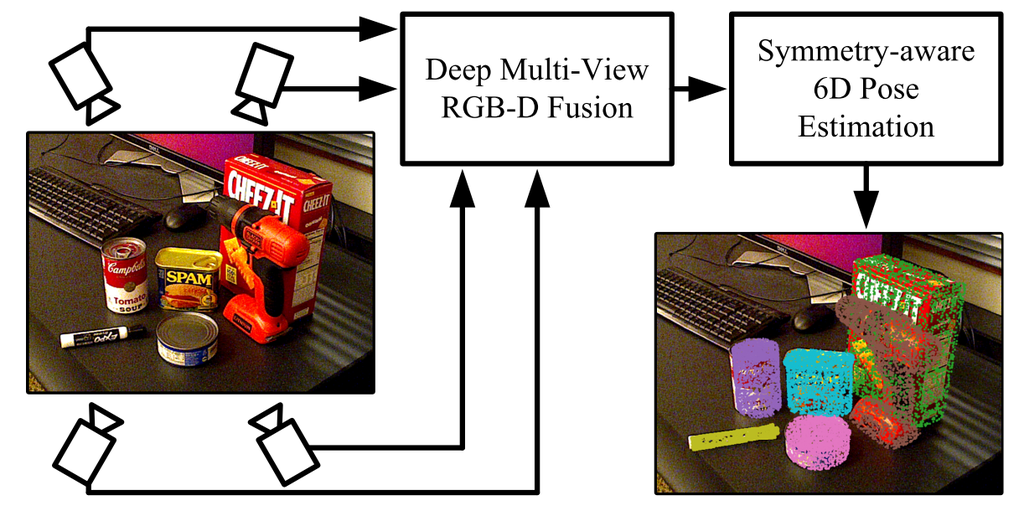

We propose a new 6D pose estimation algorithm that infers the pose of multiple objects in a scene from multiple views using point-cloud and RGB information. Our method is based on keypoint detectors and multi-directional fusion of RGB and point-cloud data obtained from multiple views. The keypoint detectors are extended to also work for symmetric objects. Our approach achieves unprecedented performance on several public benchmark datasets.

more

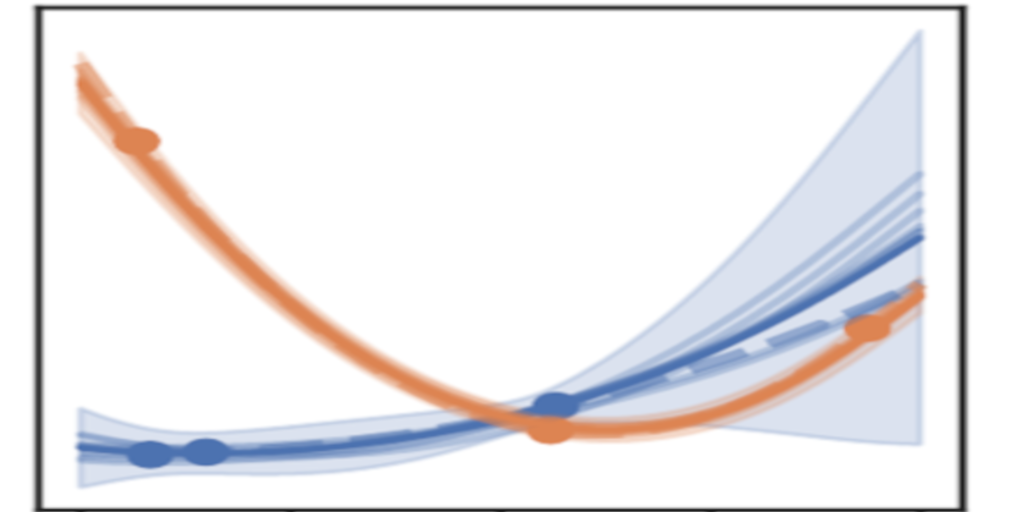

The Neural Process (NP) is a prominent deep neural network-based Bayesian meta-learning (BML) architecture, which has shown remarkable results in recent years. Prior work studies a range of architectural modifications to boost performance, such as attentive computation paths or improved context aggregation schemes, while the influence of the variational inference (VI) scheme remains under-explored. GMM-NP does not require complex architectural modifications, resulting in a powerful, yet conceptually simple BML model, which outperforms the state of the art on a range of challenging experiments, highlighting its applicability to settings where data is scarce.

more

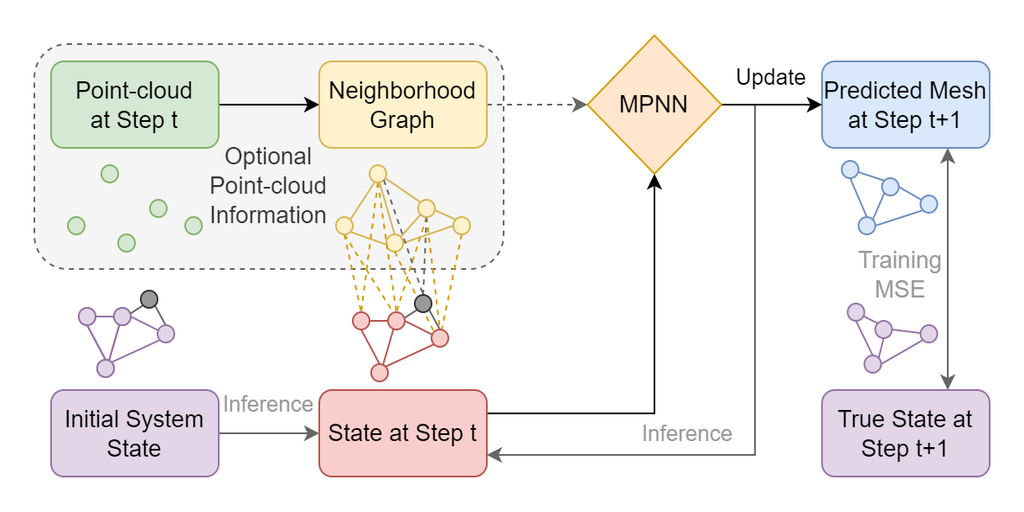

Physical simulations that accurately model reality are crucial for many engineering disciplines such as mechanical engineering and robotic motion planning. In recent years, learned Graph Network Simulators produced accurate mesh-based simulations while requiring only a fraction of the computational cost of traditional simulators.

more

In this paper, we propose a novel algorithm that extends Adversarial Imitation Learning to use preferences as feedback in addition to demonstrations. Results show that our method can learn from expert and imperfect demonstrations. Experiments show the method's effectiveness on robotic manipulation benchmarks.

more

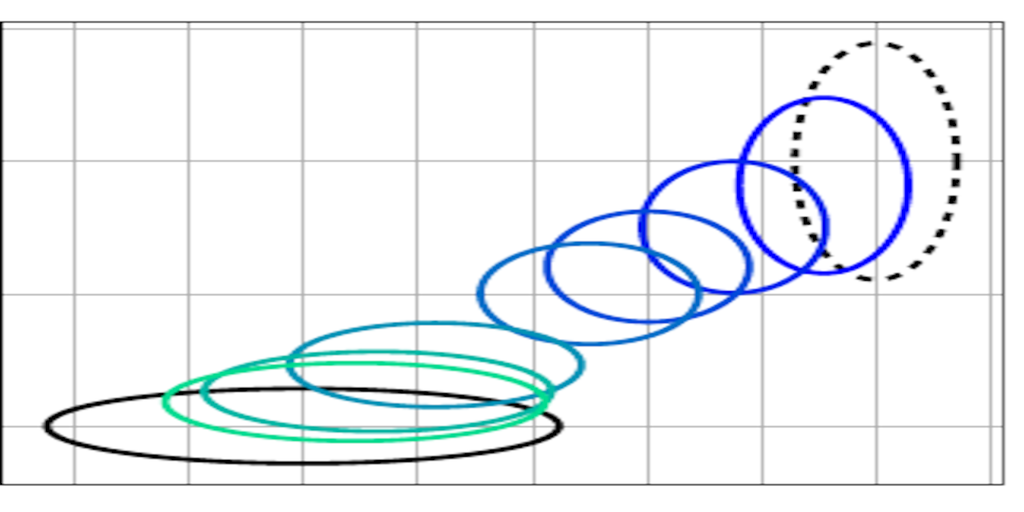

Movement Primitives (MPs) are a well-known concept to represent and generate modular trajectories. MPs can be broadly categorised into two types: (a) dynamics-based approaches that generate smooth trajectories from any initial state, e.g., Dynamic Movement Primitives (DMPs), and (b) probabilistic approaches that capture higher-order statistics of the motion, e.g., Probabilistic Movement Primitives (ProMPs).
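As a rough illustration of the probabilistic family, a trajectory can be written as a weighted sum of basis functions, and the weights can be fitted to a demonstration by regression. The sketch below is a minimal toy version under our own assumptions, not the lab's implementation: the function names, the number of basis functions, the bandwidth and the sine-shaped demonstration are all hypothetical. A full ProMP would additionally fit a distribution over the weights across several demonstrations.

```python
import numpy as np

def rbf_features(t, n_basis=15):
    """Normalized Gaussian basis functions evaluated at phases t in [0, 1]."""
    centers = np.linspace(0.0, 1.0, n_basis)
    width = centers[1] - centers[0]  # assumed bandwidth: one center spacing
    phi = np.exp(-0.5 * ((t[:, None] - centers[None, :]) / width) ** 2)
    return phi / phi.sum(axis=1, keepdims=True)

def fit_weights(t, y, n_basis=15, ridge=1e-6):
    """Ridge regression of a single demonstrated trajectory onto the basis."""
    phi = rbf_features(t, n_basis)
    gram = phi.T @ phi + ridge * np.eye(n_basis)
    return np.linalg.solve(gram, phi.T @ y)

t = np.linspace(0.0, 1.0, 200)
demo = np.sin(2.0 * np.pi * t)  # toy demonstration, purely illustrative
w = fit_weights(t, demo)

# The weight vector w is the compact representation of the movement.
reconstruction = rbf_features(t) @ w
rmse = float(np.sqrt(np.mean((reconstruction - demo) ** 2)))
```

Here a 200-step trajectory is compressed into 15 weights, which is the kind of low-dimensional parameterization that learning algorithms can then operate on.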

more

We combine a mixture of movement primitives with a distribution matching objective to learn versatile behaviors that match the expert’s behavior and versatility.

more

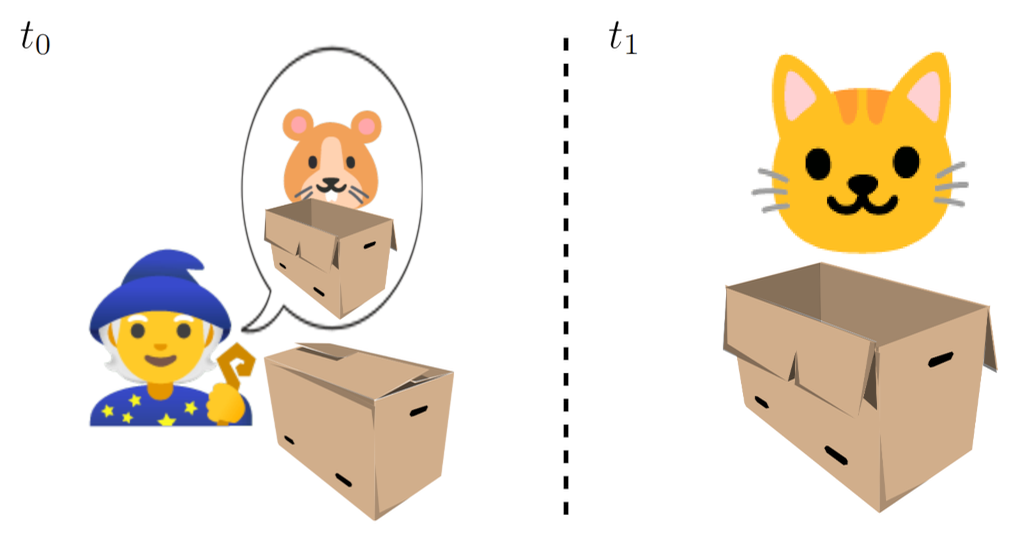

We study how recent state-space modeling approaches for model-based RL represent uncertainties. We find some flaws and propose a theoretically better-grounded alternative. We show it improves performance in tasks where it is important to appropriately capture uncertainty. If you want to know how this relates to the cat and the hamster, you have to read the paper.

more

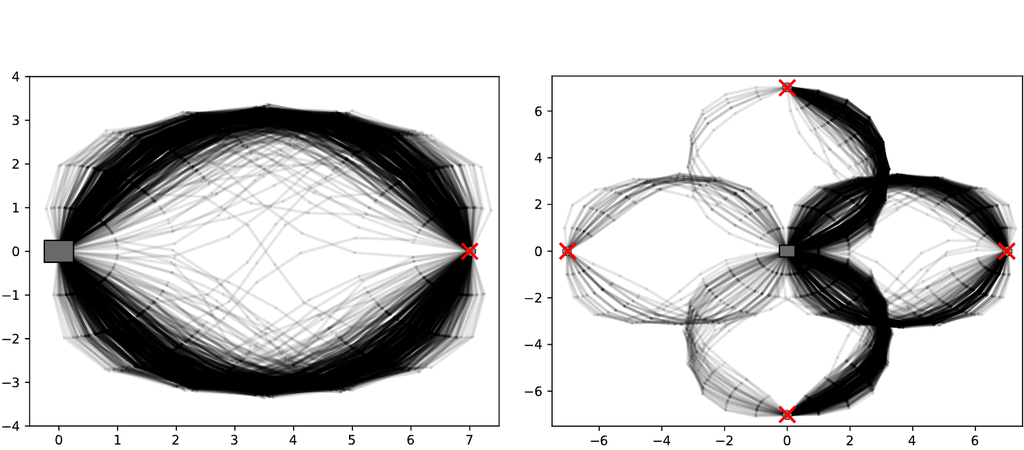

Episode-based reinforcement learning (ERL) algorithms treat reinforcement learning (RL) as a black-box optimization problem where we learn to select a parameter vector of a controller, often represented as a movement primitive, for a given task descriptor called a context.
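To make the black-box view concrete, the sketch below searches over a controller parameter vector using only the return of whole episodes. It uses a plain cross-entropy method as a stand-in optimizer, which is our own choice for illustration and not the algorithm from the paper. The `episode_return` function is a hypothetical placeholder for rolling out a movement primitive on a robot, and the fixed `target` plays the role of a single context.

```python
import numpy as np

def episode_return(theta, target):
    """Hypothetical black-box episode: the return is highest at theta == target."""
    return -float(np.sum((theta - target) ** 2))

def cross_entropy_search(target, dim=2, iters=30, pop=50, n_elite=10, seed=0):
    """Generic cross-entropy search over a Gaussian in parameter space."""
    rng = np.random.default_rng(seed)
    mean, std = np.zeros(dim), 2.0 * np.ones(dim)
    for _ in range(iters):
        # Sample one parameter vector per episode and record its return.
        thetas = mean + std * rng.standard_normal((pop, dim))
        returns = np.array([episode_return(th, target) for th in thetas])
        # Refit the search distribution to the best-performing samples.
        elite = thetas[np.argsort(returns)[-n_elite:]]
        mean, std = elite.mean(axis=0), elite.std(axis=0) + 1e-3
    return mean

target = np.array([1.0, -2.0])  # stands in for one fixed context
theta_star = cross_entropy_search(target)
```

The optimizer never looks inside an episode: it only sees one scalar return per sampled parameter vector, which is what treating RL as black-box optimization means here.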

more

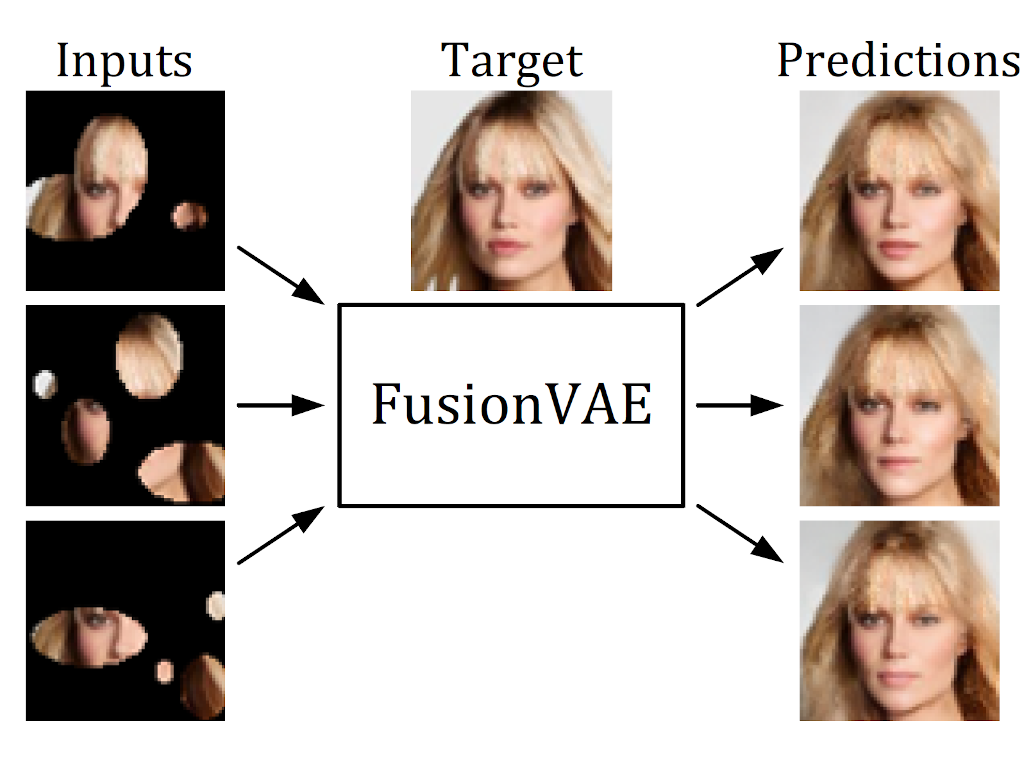

We present a novel deep hierarchical variational autoencoder that can serve as a basis for many fusion tasks. It can generate diverse image samples that are conditioned on multiple noisy, occluded, or only partially visible input images. We created three novel image fusion datasets and show that our method outperforms traditional approaches significantly.

more

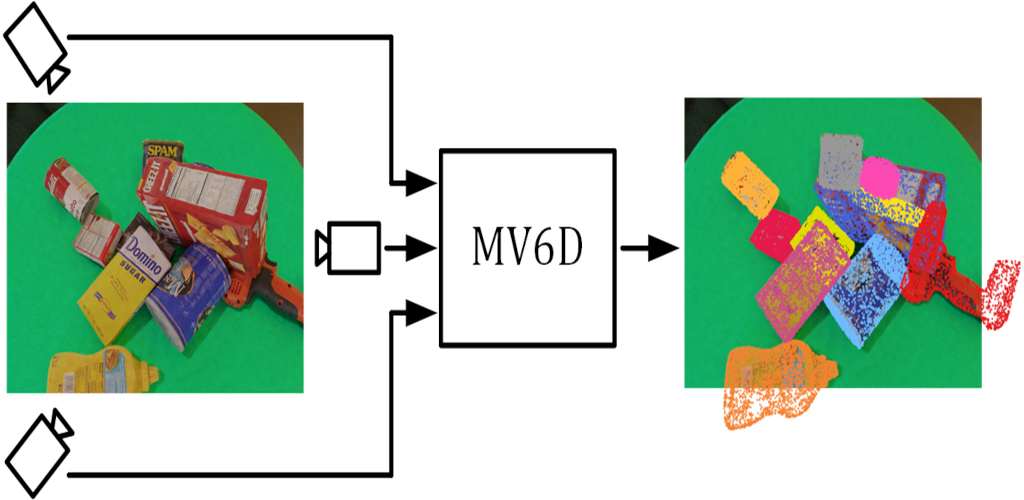

We present a novel deep learning method that estimates the 6D poses of all objects in a cluttered scene based on multiple RGB-D images. Our approach is considerably more accurate than previous approaches, especially for heavily occluded objects, and it is robust to dynamic camera setups as well as inaccurate camera calibration.

more

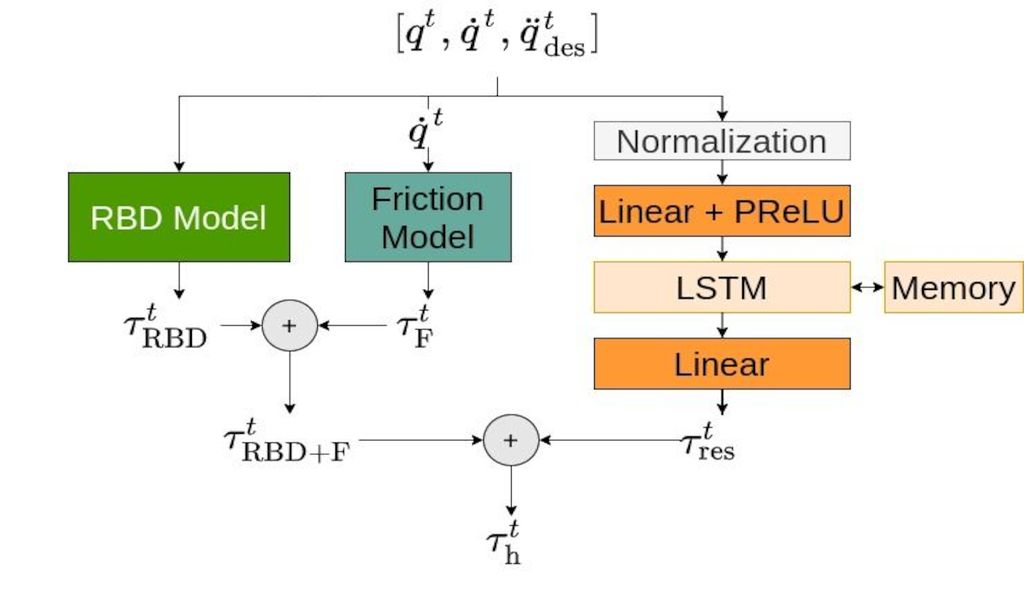

We propose a new formulation for a residual hybrid inverse dynamics model, which combines a fully physically consistent rigid-body dynamics model with a recurrent LSTM and a Coulomb friction function. The model is trained end-to-end using a new formulation of Barycentric Parameters called “Differentiable Barycentric”, which implicitly guarantees all conditions of physical consistency. In our real robot motion tracking experiments, we show that the new model is able to achieve compliant and precise motion tracking on unseen movements.

more

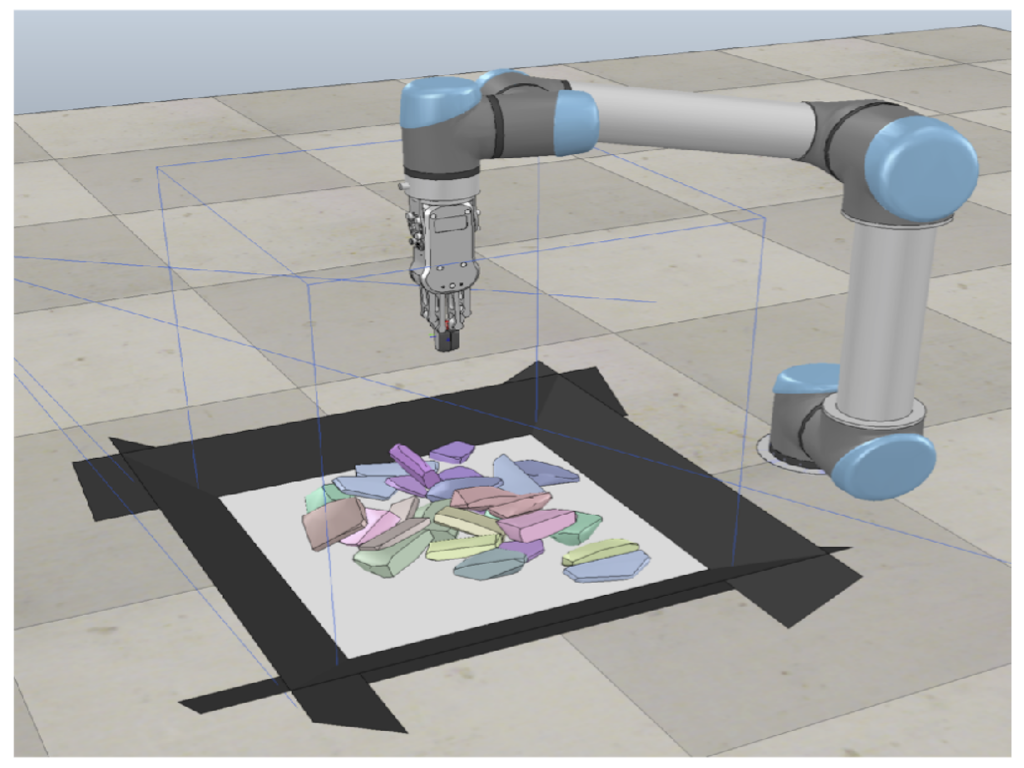

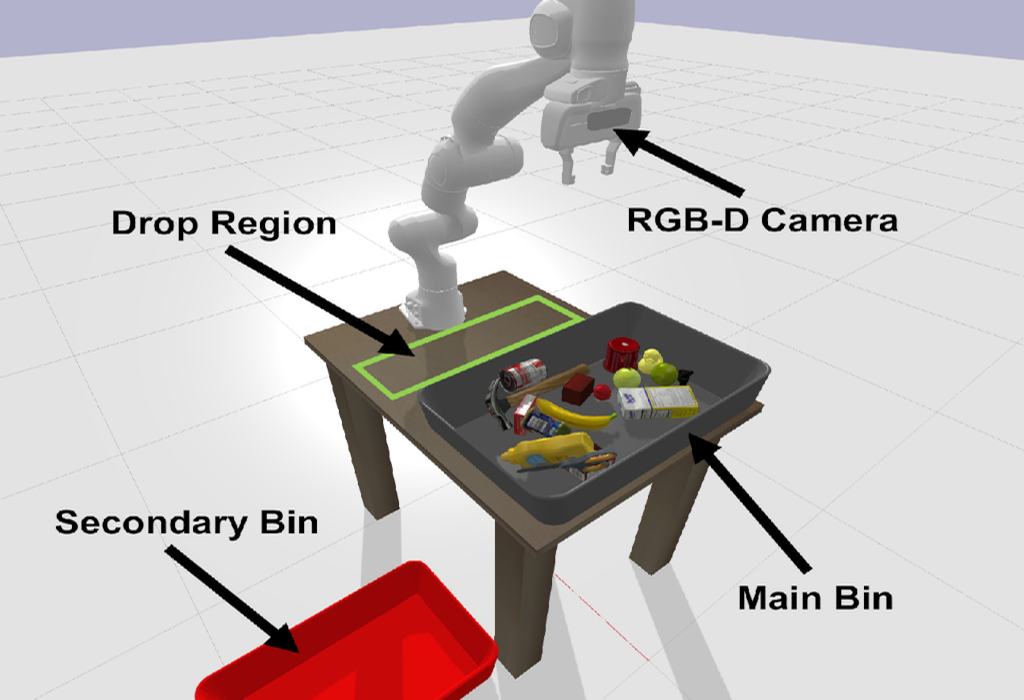

In this paper, we present a new approach for interactive scene segmentation using deep reinforcement learning. Our robot can learn to push objects in a heap such that semantic segmentation algorithms can detect every object in the heavily cluttered heap.

more

Mechanical Search (MS) is a framework for object retrieval, which uses a heuristic algorithm for pushing and rule-based algorithms for high-level planning. While rule-based policies profit from human intuition in how they work, they usually perform sub-optimally in many cases. We present a deep hierarchical reinforcement learning (RL) algorithm to perform this task, showing an increased search performance in terms of the number of required manipulations, success rate, and computation time!

more

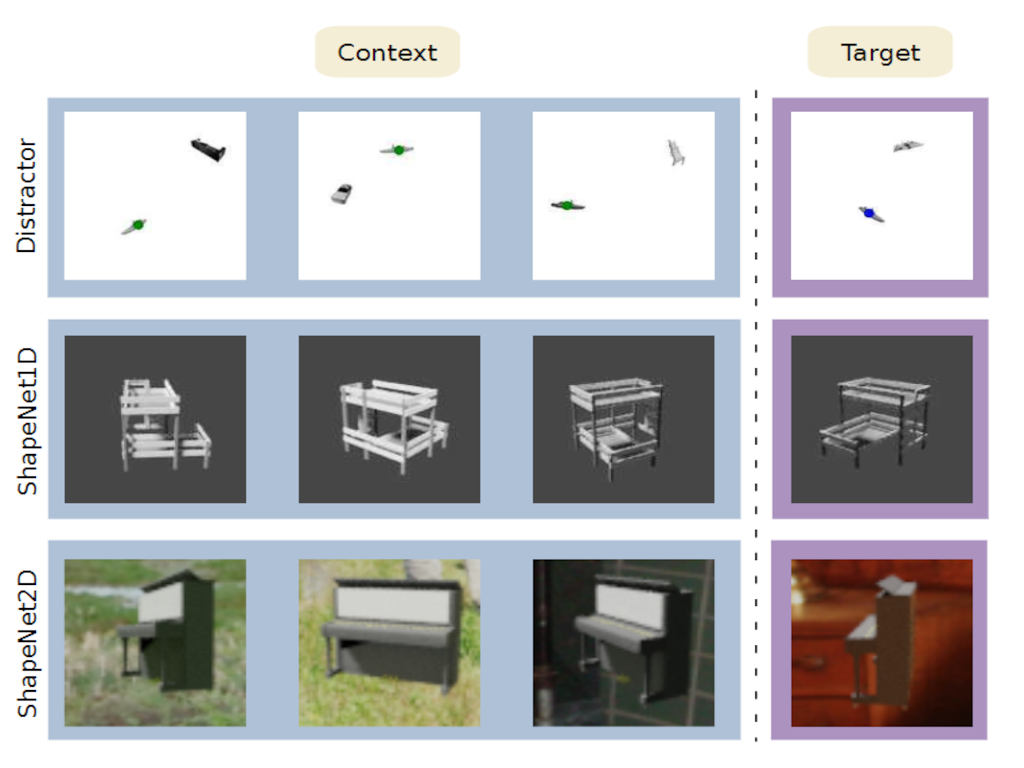

We design two new types of cross-category level vision regression tasks, namely object discovery and pose estimation, which are of unprecedented complexity in the meta-learning domain for computer vision, and exhaustively evaluate common meta-learning techniques to strengthen the generalization capability. Furthermore, we propose functional contrastive learning (FCL) over the task representations in Conditional Neural Processes (CNPs) and train in an end-to-end fashion.

more

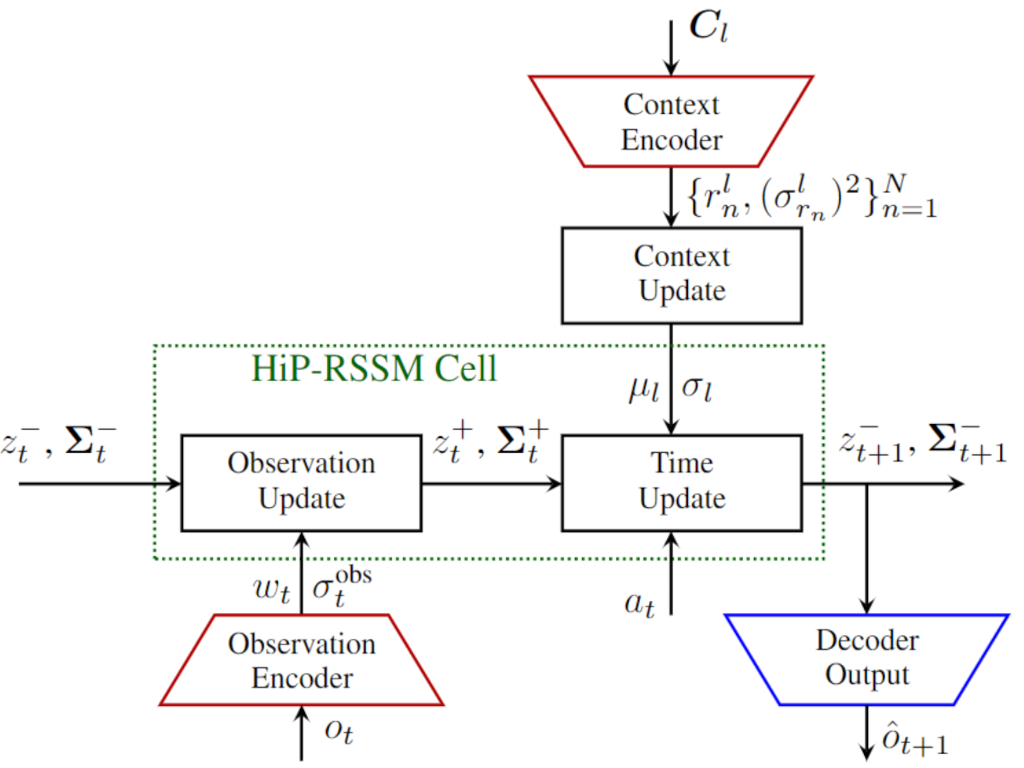

We propose a multi-task deep Kalman model that can adapt to changing dynamics and environments. The model achieves state-of-the-art performance on several non-stationary robotic benchmarks with little computational overhead!

more

We propose a new method which enables robots to learn versatile and highly accurate skills in the contextual policy search setting by optimizing a mixture of experts model. We make use of Curriculum Learning, where the agent concentrates on local context regions it favors. Mathematical properties allow the algorithm to adjust the model complexity to find as many solutions as possible.

A video presenting our work can be found here.

more

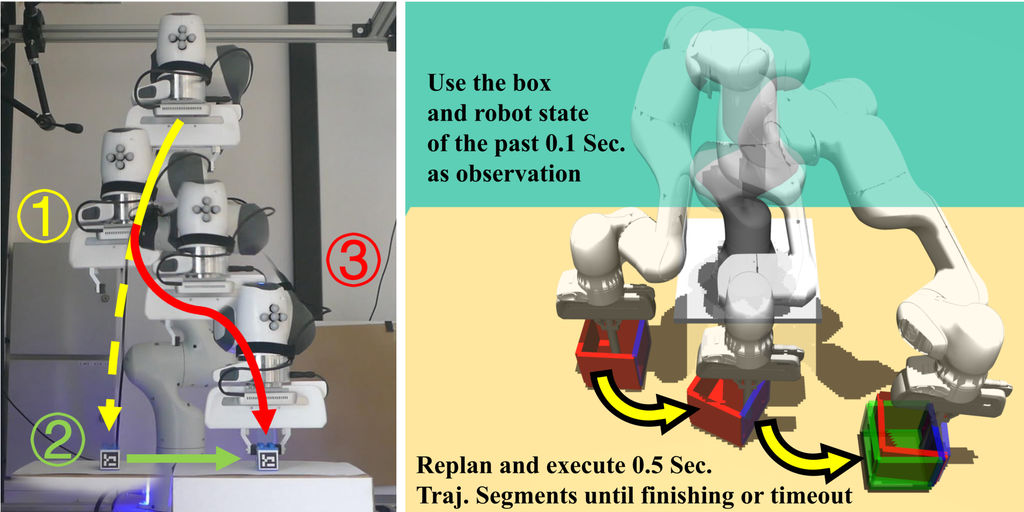

We developed a new residual reinforcement learning method that manipulates not only the output of a controller but also its input (e.g., the set-points). We applied this method to a real robot peg-in-the-hole setup with a significant amount of position and orientation uncertainty.

more

Do you like Deep RL methods such as TRPO or PPO? Then you will also like this one! Our differentiable trust region layers can be used on top of any policy optimization algorithm, such as policy gradients, to obtain stable updates -- no approximations or implementation choices required :) Performance is on par with PPO on simple exploration scenarios, while we outperform PPO on more complex exploration environments.

Neural Processes are powerful tools for probabilistic meta-learning. Yet, they use rather basic aggregation methods, i.e., a mean aggregator for the context, which does not give consistent uncertainty estimates and leads to poor prediction performance. Aggregating in a Bayesian way using Gaussian conditioning does a much better job :)
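The difference between the two aggregators can be sketched with standard Gaussian conditioning. The snippet below is a simplified illustration under our own assumptions, not the paper's code: each context point is assumed to yield a latent observation `r` with its own variance `var_r`, the prior over the latent variable is a factorized Gaussian, and all names and numbers are hypothetical.

```python
import numpy as np

def mean_aggregate(r):
    """Baseline: every context point counts equally and no uncertainty is returned."""
    return r.mean(axis=0)

def bayesian_aggregate(r, var_r, mu0=0.0, var0=1.0):
    """Gaussian posterior over a latent z given noisy observations r_i of z.

    Assumes a factorized prior z ~ N(mu0, var0) and r_i ~ N(z, var_r_i).
    """
    precision = 1.0 / var0 + np.sum(1.0 / var_r, axis=0)
    var_z = 1.0 / precision
    mu_z = mu0 + var_z * np.sum((r - mu0) / var_r, axis=0)
    return mu_z, var_z

# Three context points for a 1-D latent; the third is very uncertain.
r = np.array([[1.0], [1.2], [5.0]])
var_r = np.array([[0.1], [0.1], [10.0]])

mu_z, var_z = bayesian_aggregate(r, var_r)
baseline = mean_aggregate(r)
```

In this toy setting the uncertain third point pulls the plain mean far more than it pulls the Bayesian posterior mean, and the posterior variance shrinks as more context points are added.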

An action-conditional probabilistic model inspired by Kalman filter operations in the latent state. Find out how we learn the complex non-Markovian dynamics of pneumatic soft robots and large hydraulic robots with this disentangled state and action representation.

more

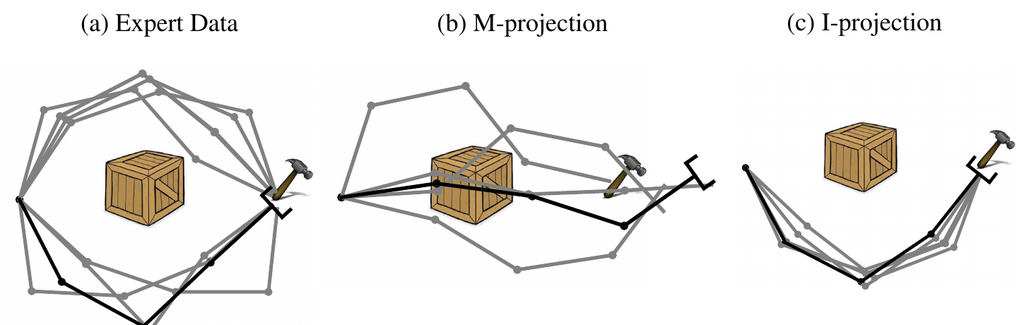

Many methods for machine learning rely on approximate inference from intractable probability distributions. Learning sufficiently accurate approximations requires a rich model family and careful exploration of the relevant modes of the target distribution...

more

Using the I-Projection for Mixture Density Estimation. Find out why maximum likelihood is not well suited for mixture density modelling and why you should use the I-projection instead.
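The contrast can be seen on a one-dimensional toy problem. The sketch below fits a single Gaussian to a bimodal target in two ways: by moment matching, which is what maximum likelihood (the M-projection) amounts to for a Gaussian, and by minimizing the reverse KL divergence KL(q || p), which is the I-projection. Everything here is an illustrative assumption: the target, the grid-based integration and the brute-force search are ours, and a single Gaussian is used instead of a mixture to keep the example short.

```python
import numpy as np

x = np.linspace(-12.0, 12.0, 2401)
dx = x[1] - x[0]

def log_normal(x, mu, sigma):
    return -0.5 * np.log(2.0 * np.pi * sigma**2) - 0.5 * ((x - mu) / sigma) ** 2

# Bimodal target: equal mixture of N(-3, 0.5^2) and N(3, 0.5^2).
log_p = np.logaddexp(log_normal(x, -3.0, 0.5), log_normal(x, 3.0, 0.5)) + np.log(0.5)
p = np.exp(log_p)

# M-projection (maximum likelihood) of a single Gaussian: match mean and variance.
m_mu = float(np.sum(x * p) * dx)
m_sigma = float(np.sqrt(np.sum((x - m_mu) ** 2 * p) * dx))

def reverse_kl(mu, sigma):
    """KL(q || p) for q = N(mu, sigma^2), integrated numerically on the grid."""
    log_q = log_normal(x, mu, sigma)
    return float(np.sum(np.exp(log_q) * (log_q - log_p)) * dx)

# I-projection: brute-force search over a coarse (mu, sigma) grid.
candidates = [(mu, s)
              for mu in np.linspace(-5.0, 5.0, 101)
              for s in np.linspace(0.2, 4.0, 39)]
i_mu, i_sigma = min(candidates, key=lambda ms: reverse_kl(*ms))
```

The moment-matched Gaussian is centered between the two modes, where the target has almost no mass, while the I-projection settles on one of the modes with a matching width.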

more

The Autonomous Learning Robots (ALR) Lab was founded in January 2020 at KIT. The new group is now building up and looking forward to doing exciting research and teaching!