Perception and Object Modelling

We are investigating new robot vision algorithms for scene understanding, with a focus on 6D pose estimation and interactive segmentation. For object modelling, we are working on graph neural network simulators that learn the dynamics of deformable objects.

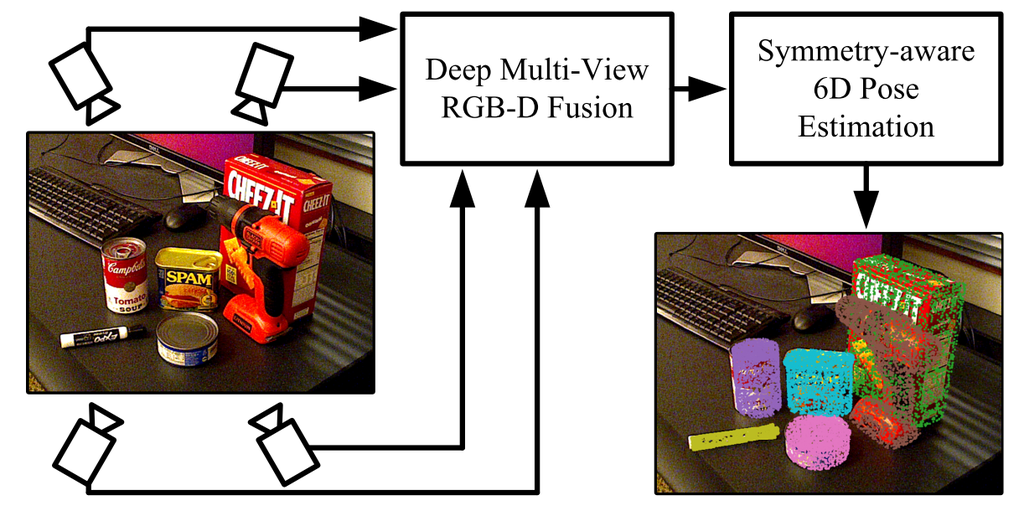

We propose a new 6D pose estimation algorithm that infers the poses of multiple objects in a scene from multiple views, using point cloud and RGB information. Our method is based on keypoint detectors and multi-directional fusion of RGB and point cloud data obtained from multiple views. The keypoint detectors are extended to also work for symmetric objects. Our approach achieves unprecedented performance on several public benchmark datasets.
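
As an illustration of how detected keypoints can be turned into a 6D pose, the sketch below fits a rigid transform to matched 3D keypoints with the standard Kabsch (SVD-based least-squares) procedure. It is a generic technique often used as the final step in keypoint-based pipelines, not necessarily the fitting step of the method above. The function name `fit_rigid_transform` and its arguments are hypothetical, and the keypoint correspondences are assumed to be given.

```python
import numpy as np


def fit_rigid_transform(model_kps, scene_kps):
    """Least-squares rotation R and translation t with scene ≈ R @ model + t.

    model_kps: (N, 3) keypoints in the object frame (assumed known).
    scene_kps: (N, 3) matching keypoints predicted in the camera frame.
    """
    model_kps = np.asarray(model_kps, dtype=float)
    scene_kps = np.asarray(scene_kps, dtype=float)

    # Centre both keypoint sets so that only the rotation remains to be solved.
    mu_m = model_kps.mean(axis=0)
    mu_s = scene_kps.mean(axis=0)

    # 3x3 cross-covariance between the centred sets.
    H = (model_kps - mu_m).T @ (scene_kps - mu_s)
    U, _, Vt = np.linalg.svd(H)

    # Flip the last axis if needed so that R is a proper rotation (det = +1).
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = mu_s - R @ mu_m
    return R, t
```

With noisy keypoints predicted from several views, the same fit can be applied to the fused keypoint estimates. At least three non-collinear correspondences are needed for a unique solution.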

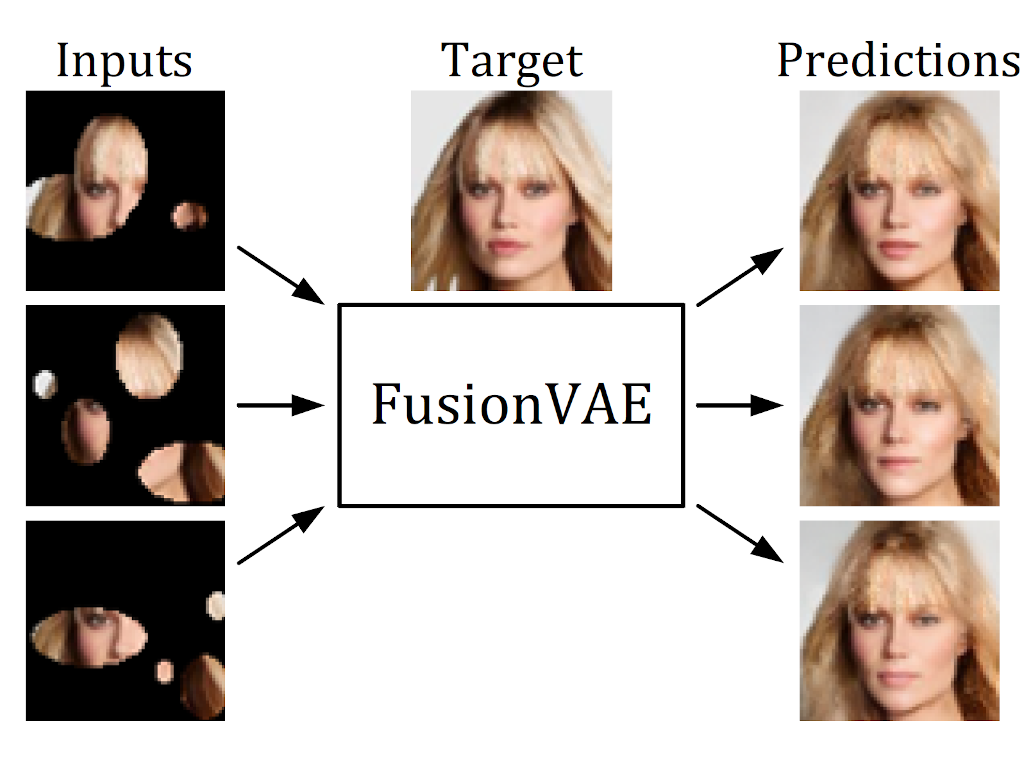

We present a novel deep hierarchical variational autoencoder that can serve as a basis for many fusion tasks. It can generate diverse image samples that are conditioned on multiple noisy, occluded, or only partially visible input images. We created three novel image fusion datasets and show that our method significantly outperforms traditional approaches.

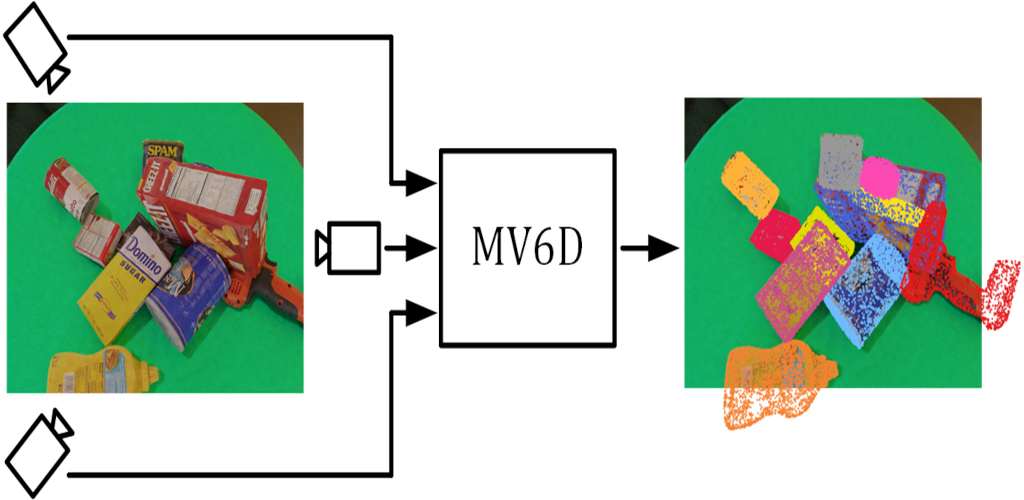

We present a novel deep learning method that estimates the 6D poses of all objects in a cluttered scene from multiple RGB-D images. Our approach is considerably more accurate than previous approaches, especially for heavily occluded objects, and it is robust to dynamic camera setups as well as inaccurate camera calibration.

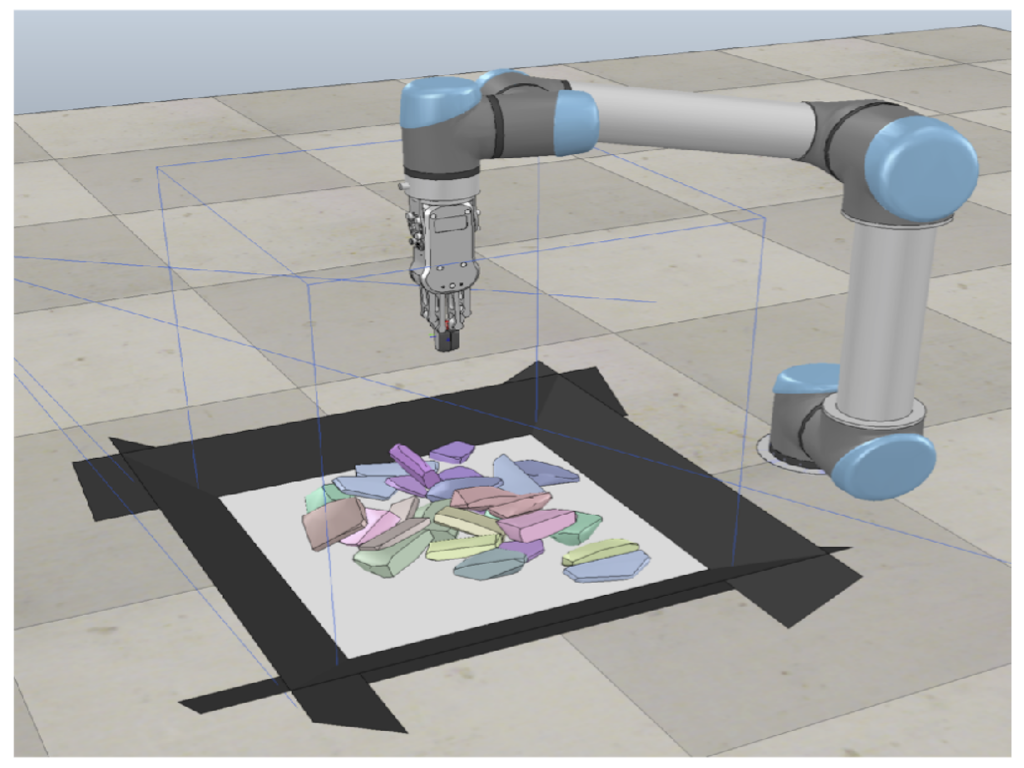

We present a new approach for interactive scene segmentation using deep reinforcement learning. The robot learns to push objects in a heap so that semantic segmentation algorithms can detect every object in the heavily cluttered heap.
